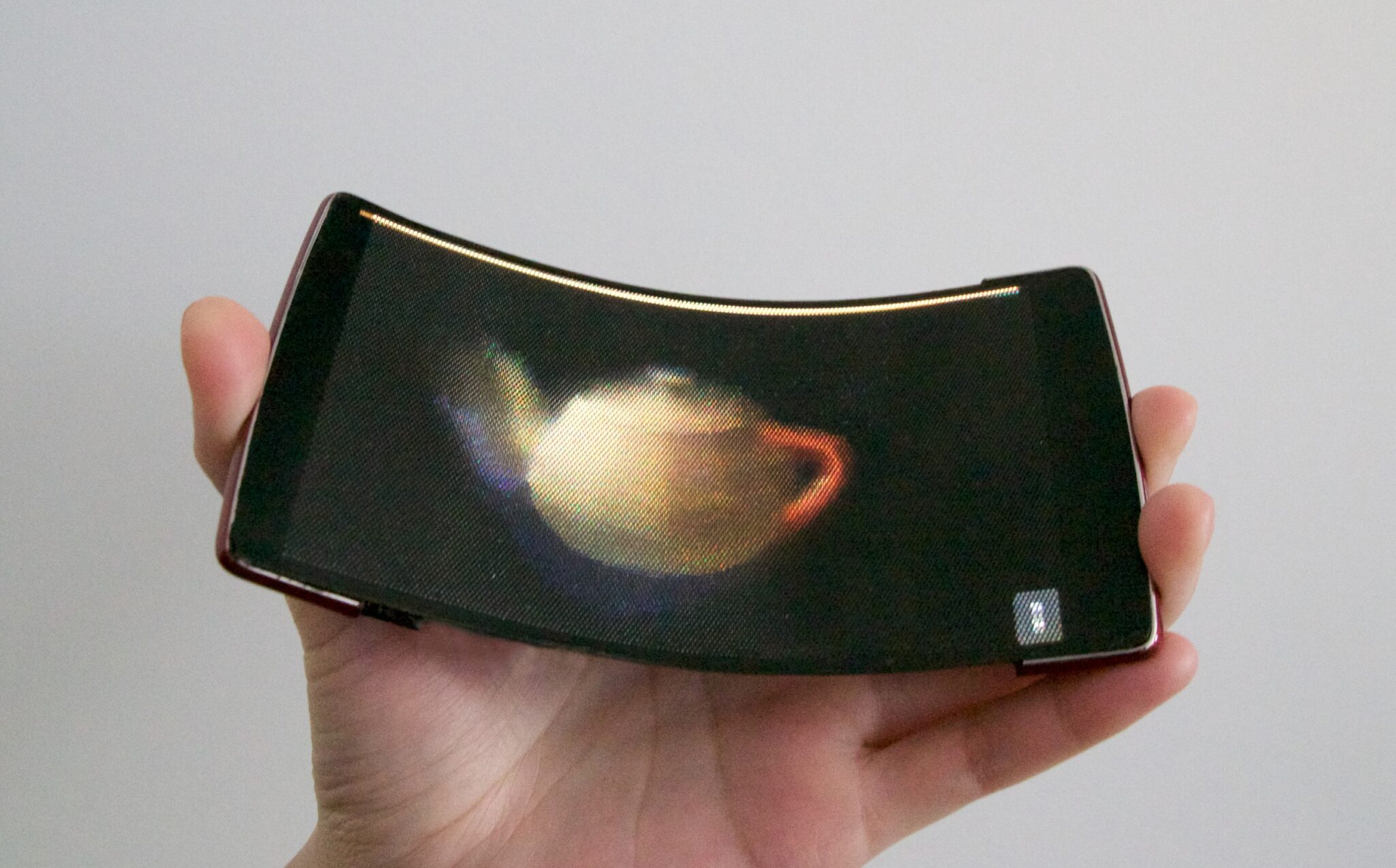

HoloFlex: A Flexible Smartphone with a Faux Holographic Display

The Holoflex is a prototype Android smartphone that runs Android 5.1 Lollipop on a 1.5 GHz Qualcomm Snapdragon 810 CPU with 2 GB of RAM and a dedicated GPU.

It features a bendable 1920 x 1080 FOLED (flexible OLED) screen that can project glasses-free 3D images to multiple users at the same time.

The Holoflex pulls off that trick thanks to a 3D-printed fisheye lens filter mounted on the display. The filter is made up of an array of over 16,000 individual fisheye lenses, each 12 pixels across, which effectively turns the screen into a 160 x 104 resolution 3D display. Users can inspect a 3D object from any angle simply by rotating the phone. (Each lens in the array acts as a single pixel of the 3D image.)
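The lens numbers above can be sanity-checked with a little arithmetic. The Python sketch below is a back-of-the-envelope check, not the Human Media Lab's design: it assumes a horizontal lens pitch of exactly 12 pixels and takes the 104 lens rows from the article as given. With 1080 pixel rows, that implies a vertical pitch of roughly 10.4 pixels, which is close to what a staggered (hexagonal) lens grid would produce; the hexagonal packing is an assumption here, not something the source states.

```python
import math

# Figures quoted in the article. LENS_ROWS is taken as given, not derived.
PANEL_W, PANEL_H = 1920, 1080
LENS_PITCH_PX = 12   # "each 12 pixels across"
LENS_ROWS = 104

def lens_columns(panel_w: int, pitch_px: int) -> int:
    """Number of whole lenses that fit across the panel width."""
    return panel_w // pitch_px

def vertical_pitch(panel_h: int, rows: int) -> float:
    """Average vertical spacing between lens rows, in panel pixels."""
    return panel_h / rows

cols = lens_columns(PANEL_W, LENS_PITCH_PX)          # 1920 // 12 = 160
total_lenses = cols * LENS_ROWS                      # 160 * 104 = 16,640 ("over 16,000")
row_pitch = vertical_pitch(PANEL_H, LENS_ROWS)       # 1080 / 104, about 10.38 px
# Row spacing a hexagonal grid of 12 px lenses would have (assumption, for comparison).
hex_row_pitch = LENS_PITCH_PX * math.sqrt(3) / 2     # about 10.39 px
pixels_per_lens = PANEL_W * PANEL_H / total_lenses   # about 124.6 panel pixels per lens

print(f"{cols} x {LENS_ROWS} = {total_lenses} lenses")
print(f"row pitch {row_pitch:.2f} px vs hex-packed {hex_row_pitch:.2f} px")
print(f"~{pixels_per_lens:.0f} panel pixels per 3D pixel")
```

Roughly speaking, the ~125 panel pixels sitting behind each lens are what supply the different viewing angles, which is why the 3D image is so much coarser than the panel underneath it.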

You can see the screen in action in this video:

Does anyone have a clue why this would be useful? I can’t see a use, myself.

3D modeling on a computer screen is an incredibly useful tool for visualization and design. When the bugs are worked out of VR headsets like the Oculus Rift and the smartphone-based units like Google Cardboard, it’s going to be useful there as well.

But this strikes me more as a gimmick than a useful tool. The resolution is too low, and the interface too quirky, for the Holoflex to be all that useful.

To put it simply, the Holoflex is hampered by the Human Media Lab’s ongoing obsession with flexing a device as an input method. We’ve seen it in their previous prototypes. Sometimes it works, but in the case of the Holoflex it’s just a pointless gimmick.

An interface based on a 3-finger manipulation of the image would be more useful, practical, and functional, wouldn’t you agree?

Queen’s University via Spectrum

Comments

NatCh May 9, 2016 at 12:45 pm

In this iteration, I’d agree that this is little more than a gimmick. However, you never know which gimmicky ideas are going to turn out to be a really big deal. At one point computers were little more than a gimmick. I’ve still got an old Nixdorf "pocket computer" around here somewhere (http://www.computermuseum.li/Testpage/NixdorfPocketComputer.htm). That thing was so unbelievably useless when it came out that my parents gave it to their pre-teen boys to bang around with. It did have a nifty nixie-like display module, as I recall … maybe I should dig it up and see if I can repurpose the display for something interesting.

Anyway, back to my point: resolutions can be increased and interfaces can be refined. As a lab prototype, it’s quite an interesting concept, whether it ever goes anywhere or not. :shrug:

Nate Hoffelder May 11, 2016 at 9:35 am

Computers may have been gimmicks in the time of Babbage, but that was mainly because he was centuries ahead of his time and the available tech. Computers stopped being gimmicks right around the time that pre-IBM got its first contracts with the US Census Bureau for what we would now call data processing. They have been serious and legit tech ever since. (Some specific devices may have been gimmicks, but not the entire field.)

NatCh May 11, 2016 at 10:18 am

Indeed, but the essence of my point is that gimmicks are gimmicks, until they’re not, and it’s tough to tell which ones are going to make that transition. Of course, the vast majority of them don’t, so the assumption that any *given* gimmick won’t *is* a pretty safe one. 🙂

Brian May 12, 2016 at 4:43 am

I think the bending screen for a user interface will remain a gimmick.

I am on the fence about the microlens light-field display technology. Nvidia has demoed 3D glasses using this style of display that were also really low resolution. Here is a video from a 2013 trade show where Nvidia was showing off these glasses and talking about them:

I think the video does a good job of listing the upsides. The downside of this style of light-field display is that it requires a lot of pixels. Using the article’s display for the pixel ratio: Full HD (1920×1080) => 160×104, 4K (3840×2160) => 320×208, 8K (7680×4320) => 640×416, 16K (15360×8640) => 1280×832. So it may be a while before a decent-resolution display becomes feasible.
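Those scaling figures follow from keeping the HoloFlex's lens-to-pixel ratio (a 1920 × 1080 panel in, 160 × 104 lenses out) fixed and applying it to larger panels. Here is a minimal Python sketch of that arithmetic, assuming the ratio stays constant as the panel grows; a real design could change lens size instead. `light_field_resolution` is a hypothetical helper name used only for illustration.

```python
from fractions import Fraction

# Ratio taken from the HoloFlex figures: 1920 x 1080 panel -> 160 x 104 lenses.
RATIO_W = Fraction(160, 1920)   # = 1/12
RATIO_H = Fraction(104, 1080)   # = 13/135

PANELS = {
    "Full HD": (1920, 1080),
    "4K": (3840, 2160),
    "8K": (7680, 4320),
    "16K": (15360, 8640),
}

def light_field_resolution(panel_w: int, panel_h: int) -> tuple[int, int]:
    """Scale a panel resolution by the HoloFlex lens ratio (assumes the ratio is fixed)."""
    # Exact rational arithmetic avoids float rounding; int() truncates any remainder.
    return int(panel_w * RATIO_W), int(panel_h * RATIO_H)

results = {name: light_field_resolution(w, h) for name, (w, h) in PANELS.items()}
for name, (w, h) in results.items():
    pw, ph = PANELS[name]
    print(f"{name}: {pw}x{ph} -> {w}x{h}")
```

Under this assumption even a 16K panel only yields a 1280 × 832 light-field image, which is the pixel cost the comment is pointing at.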